Can Small Websites With Low Domain Authority Outrank Large Sites?

Can Small Websites With Low Domain Authority Outrank Large Sites In Google?

With Google regularly announcing new core algorithm updates, one of the most common things I hear from independent web publishers is their concern that search results are becoming increasingly dominated by large websites. There is a fear that small and medium-sized sites will, over time, lose the ability to compete with behemoths like Wikipedia or fast-growing brands like Buzzfeed or Mashable.

Large sites obviously have a much higher domain authority (a composite industry measurement of website age, credibility, and size) than smaller websites. This raises the question: can small websites with low domain authority outrank large sites?

While we can always find examples of a small site appearing above a larger one in certain Google search results, most publishers believe that large websites are becoming ever more dominant at the top of search results.

Below, I’ll highlight why the data shows this is likely not the case. I’ll also share some details on why smaller sites might actually have an advantage over larger sites and how publishers can make the most of that advantage.

Do large websites dominate Google search results?

While it may seem like large websites are always at the top of Google search results, the truth is that search results grow more and more diverse each year. This means that large sites lose more real estate to smaller sites, medium-sized sites, rich snippets, video content, and other types of search results every year.

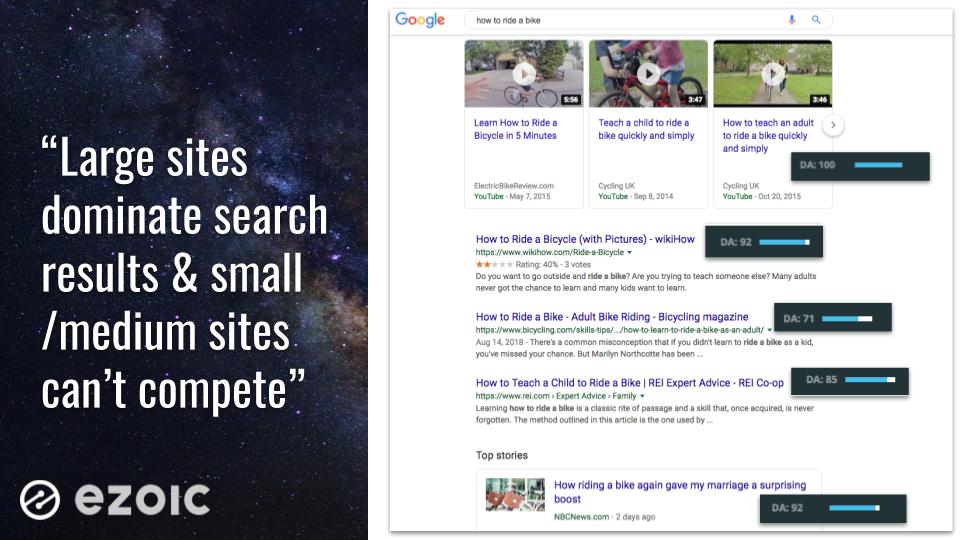

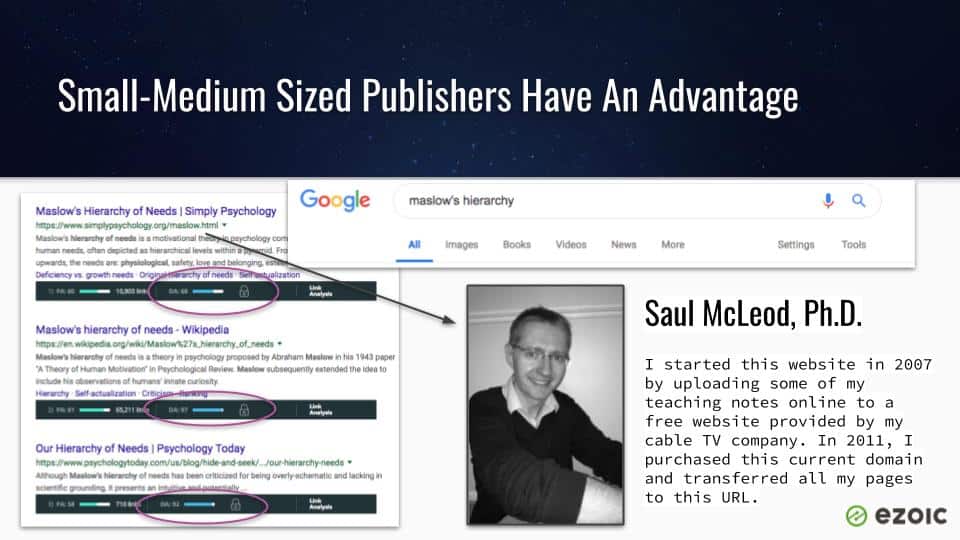

Confirmation bias is likely one of the reasons that many people inaccurately believe that larger websites are becoming increasingly common at the top of Google search results. As we can see above, a simple search for a high-volume query leads us to a search engine results page (SERP) filled with large websites with high Moz domain authority scores.

There are a few reasons why it is common to see this, but one of the most obvious is that large sites rank highly for lots of keywords, so a searcher is likely to see the same site at the top of lots of different results.

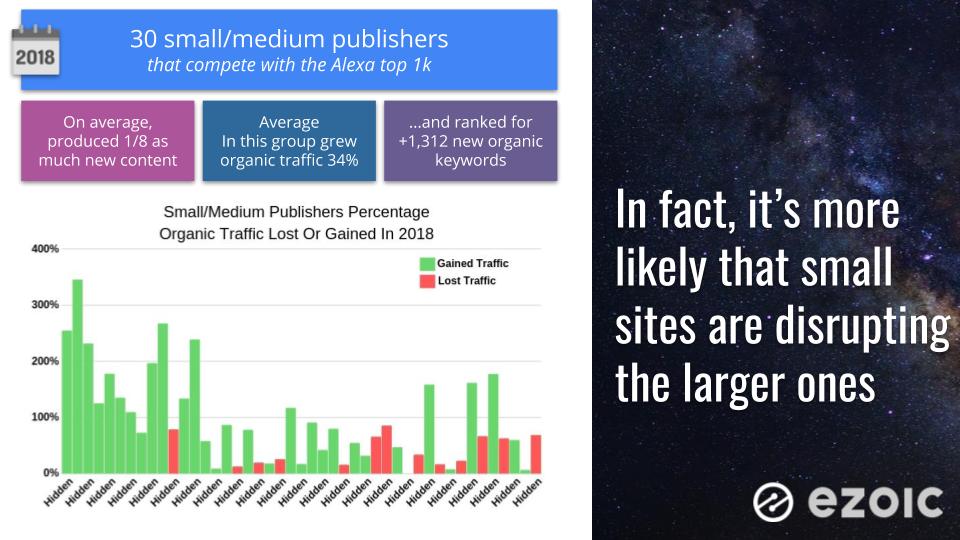

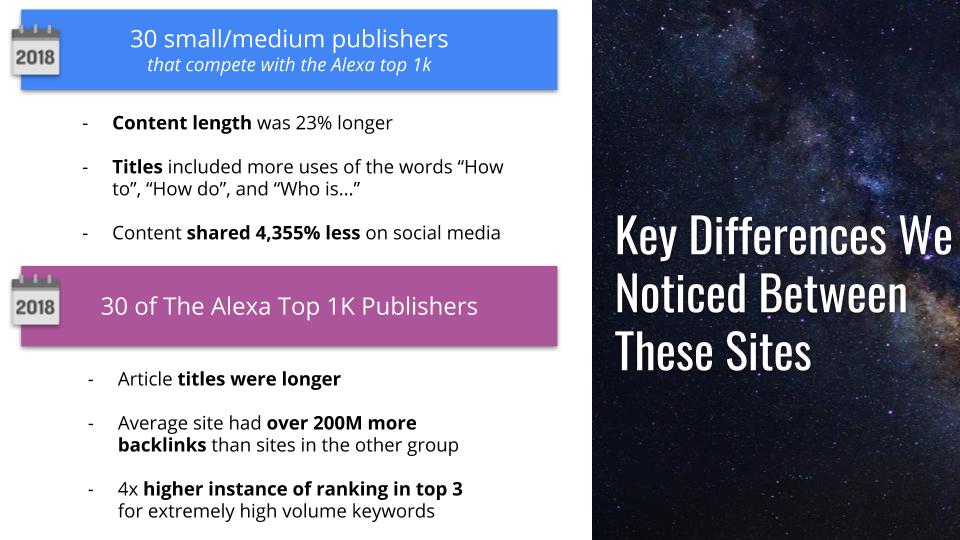

In 2018, small and medium-sized websites that directly competed with Alexa top 1,000 sites saw an average increase of 34% in organic traffic. What’s more, these sites produced about 1/8 as much new content as their larger competitors while still adding more than 1,312 new organic keywords over the course of the year.

In the same study, shown above, Alexa top 1,000 publishers saw an average decline of 14% in their organic website traffic (primarily from Google). Fewer than 20% of these large publishers saw any growth at all. In fact, most saw their organic keyword rankings drop by approximately 26%.

This means it is increasingly likely that publishers with lower domain authority scores will continue to disrupt larger brands with more established domain and page authority.
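The percentages above are year-over-year changes in organic traffic. As a minimal sketch of that arithmetic, the snippet below computes a per-site percent change and then averages it across a group of sites. The site names and session counts are invented for illustration and are not figures from the study; `percent_change` and `average_change` are hypothetical helper names.

```python
def percent_change(before: float, after: float) -> float:
    """Percent change from `before` to `after` (negative means a decline)."""
    if before <= 0:
        raise ValueError("baseline traffic must be positive")
    return (after - before) * 100.0 / before


def average_change(traffic: dict[str, tuple[float, float]]) -> float:
    """Unweighted mean of each site's percent change."""
    changes = [percent_change(b, a) for b, a in traffic.values()]
    if not changes:
        raise ValueError("need at least one site")
    return sum(changes) / len(changes)


# Hypothetical organic sessions per site: (previous year, current year).
small_sites = {
    "small-site-a.example": (10_000, 14_000),
    "small-site-b.example": (25_000, 32_000),
}

for name, (before, after) in small_sites.items():
    print(f"{name}: {percent_change(before, after):+.1f}%")
print(f"average: {average_change(small_sites):+.1f}%")
```

An unweighted average treats every site equally regardless of its size. Weighting by baseline traffic would be a different, equally defensible choice, and the two can give noticeably different answers.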

What is domain authority and how much does it matter?

Domain authority is a concept based on language in one of Google’s original search patents. Famously, Google coined the term PageRank to describe its proprietary measurement of a website’s authority and credibility on a topic, which it uses to rank content accordingly. The idea spread through the SEO industry as many early experts and SEO software companies used the patent filing to compile their own composite scores that included measurements of domain age, backlinks, existing content rankings, and more.

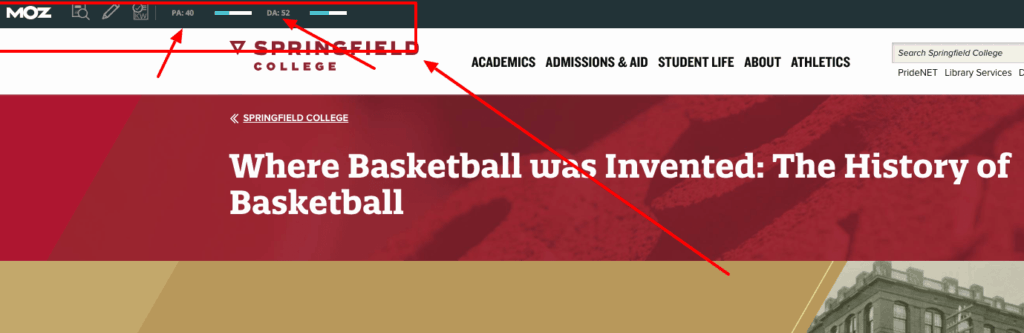

SEO software provider Moz has since established one of the most well-known and referenced domain authority scores. Web publishers can install the Moz Chrome extension for free and easily see domain and page authority scores, calculated according to Moz’s particular methodology, in search results and on any web pages they are visiting.

It is very important to point out that Google does not share any scoring or domain authority criteria externally. Third parties like Moz are simply reverse-engineering their best guess at the types of things Google looks at to establish domain authority and assigning an indexed score to those criteria.

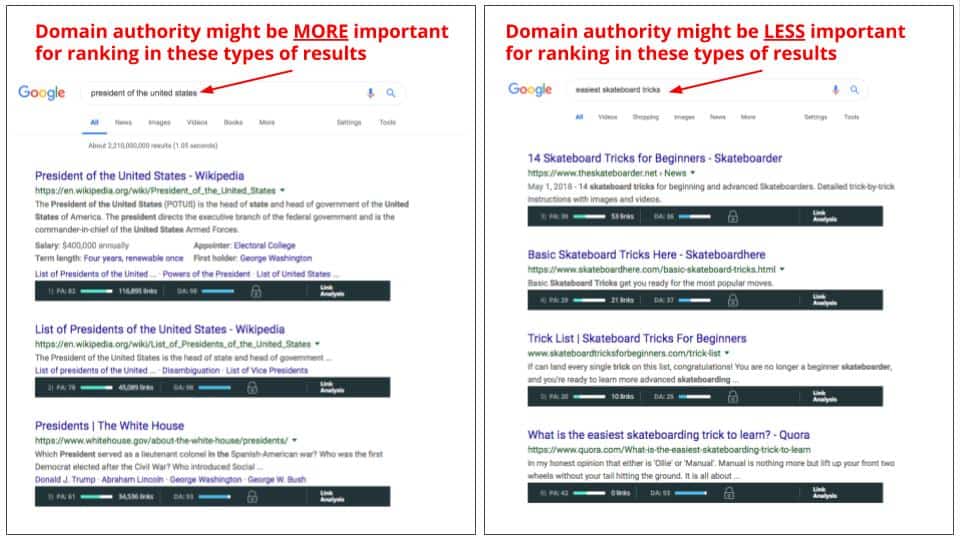

Moz’s domain authority score has no effect on actual website rankings in search results. Furthermore, the impact of domain and page authority on website rankings seems to vary quite a bit depending on the search query. There are a number of hypotheses about why it might work this way, but it seems that domain authority is not massively important for some search queries and might be a major factor for others.

We’ve talked about domain authority before and why it is a concept worth investing time and energy into improving; however, things like domain age, backlinks, and existing rankings are not things publishers can typically affect quickly.

How do sites with lower domain authority compete?

There are a couple of interesting takeaways from our study of large brand publishers that competed directly in results against smaller publishers. One of the biggest differences was in the content itself. On average, the smaller publishers wrote longer-form content that went a little deeper into specific topics. Additionally, many of the smaller publisher results included titles with the phrases “how to” or “who is”.

Large publishers had far more backlinks and used much longer titles for a lot of their content. Additionally, they often featured a lot of rich media like video, slideshows, and image galleries that contributed to lower overall word counts.

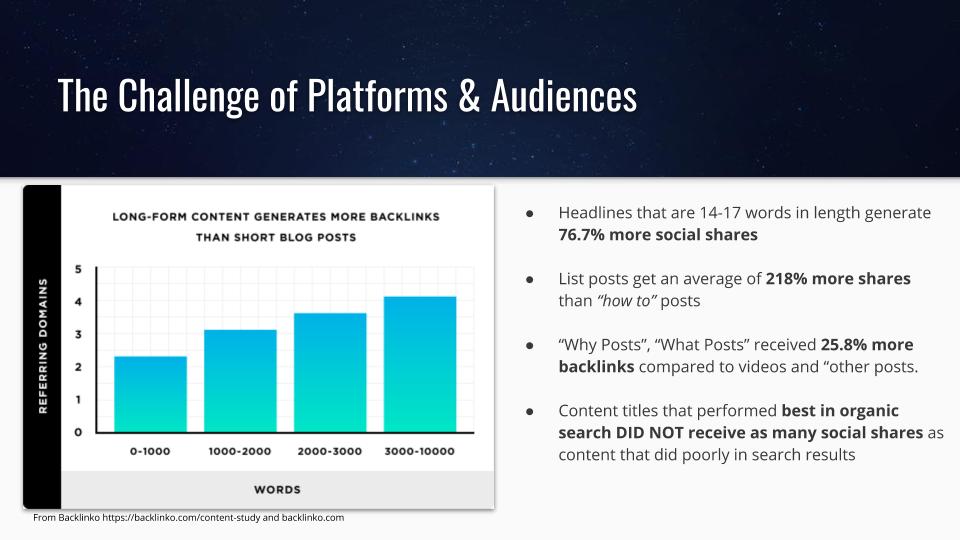

A recent study by Backlinko may help us better understand why these smaller publishers often have content that outranks the larger publishers. In the study, you can see that longer, more complex titles often perform better on social media but not as well in Google search. Yet “how to” posts often receive far more quality backlinks, giving small publishers a needed edge in an area where they might normally struggle to compete against large publishers.

Lastly, we can see that the types of titles that perform best on social platforms do not perform as well in organic search.

What does this have to do with large publishers having their organic search traffic disrupted by smaller publishers? Quite a lot actually.

Large publishers have a much broader audience and are often thinking about traffic diversity beyond Google search. They are trying to grow newsletters, logged-in users, subscribers, social followers, and more. This means creating content that attracts audiences on a lot of platforms outside of search. That broad focus leaves room for smaller publishers to concentrate on content that is designed and optimized for Google search.

This essentially gives small publishers a unique sort of advantage when it comes to competing for a ranking position in search results versus larger publishers.

What can publishers do with this information?

I think one of the most important things to take away from this data is that if you’re a small or medium-sized publisher, there’s no reason to believe that large publishers or other high-domain-authority sites are going to push your site out of search results. In fact, it is more likely that Google itself, rather than another publisher, will push your results off of the search page.

No matter if you’re a large publisher or a small one, there are probably a few things that the data tells us you can do to ensure that you maintain successful keyword rankings and organic traffic growth…

1.) Strategically create detailed and expertly-written content

Not every article has to be a 5,000-word deep dive into a topic or subject. However, your content is your most important asset in your efforts to rank higher in search results.

Is it the best result for the queries you’re targeting?

Is the title reflective of something that searchers would click on to answer their search query?

Is the content detailed enough to answer all forms of the question — and any additional long-tail iterations?

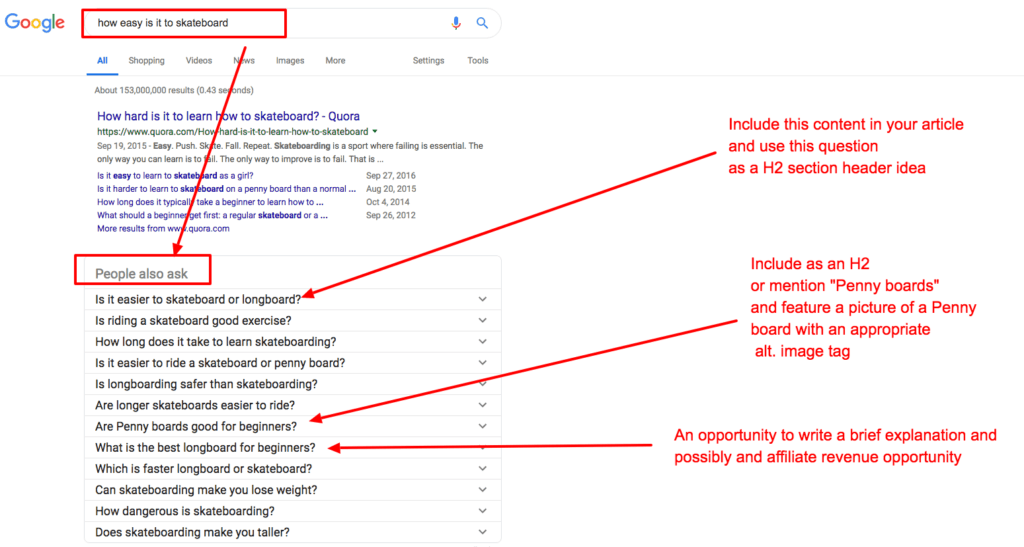

Typing the query into Google search and reviewing the “people also ask” boxes is a great way to help formulate H2s and additional paragraphs, images, and topics you may want to cover within an article. You may even find whole sections of content that could help you write a better article or uncover affiliate revenue opportunities.

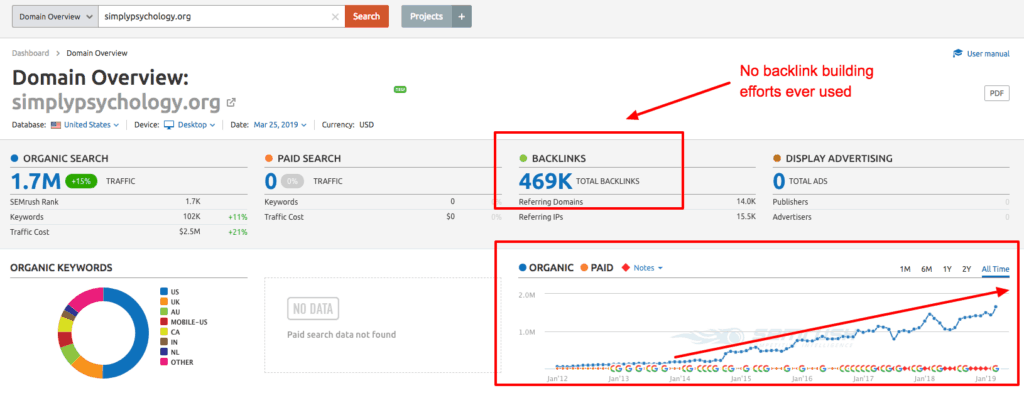

2.) Worry less about backlinks and domain authority

For me, the one big takeaway from this study was that backlinks continue to be overrated. That is not at all to say that they aren’t important for SEO. Backlinks are just particularly hard to gain and measure in a manner that is objectively healthy for your website.

While there are certainly publishers and webmasters that have built backlinks quickly and efficiently, one of the best ways to get ahead in search is by focusing on data-driven content production.

3.) Write content for your audience

Many of the larger publishers seem to struggle with maintaining a search-centric form of content production. This is often why the smaller publishers can build out content that is a better fit for searchers. Thinking strategically can help you stay ahead of the game.

While this comes back to point #1 about maintaining a data-driven approach to content production, it is important to also keep in mind who you’re writing for. I don’t just mean the people reading; I mean the platform on which they’ll be discovering your content.

People using Google search are often seeking answers vs. looking for something interesting to read.

Someone looking for answers is much more likely to click on a title like “How to properly give a cat a bath” than “9 ways you SHOULDN’T wash your cat” (as enticing as that might seem).
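To make the distinction concrete, here is a rough sketch of how a publisher might flag answer-style titles versus list-style titles across a batch of headlines. It is only a heuristic under stated assumptions: “how to” and “who is” come from the study discussed earlier, while the other prefixes and both function names are my own illustrative additions, not anything Google has published.

```python
import re

# "how to" and "who is" are the phrases mentioned in the article;
# the remaining prefixes are assumed examples of question-style openers.
ANSWER_PREFIXES = ("how to", "who is", "what is", "why ", "when ", "where ")


def looks_answer_oriented(title: str) -> bool:
    """Return True if the title opens like a direct answer to a query."""
    normalized = title.strip().lower()
    return normalized.startswith(ANSWER_PREFIXES)


def looks_like_listicle(title: str) -> bool:
    """Return True if the title opens with a number, e.g. '9 ways ...'."""
    return re.match(r"\s*\d+\s+\w+", title) is not None


titles = [
    "How to properly give a cat a bath",
    "9 ways you SHOULDN'T wash your cat",
]

for title in titles:
    print(
        f"{title!r}: answer-oriented={looks_answer_oriented(title)}, "
        f"listicle={looks_like_listicle(title)}"
    )
```

A check like this says nothing about whether a title will actually rank. It is just a quick way to audit whether a content calendar leans toward search-style or social-style headlines.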

Why is it important to understand domain authority and rankings?

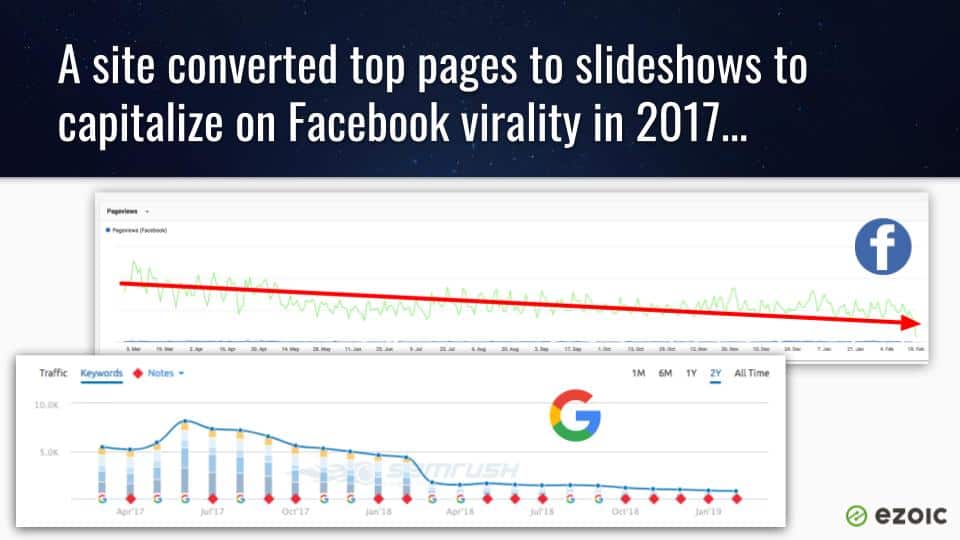

In 2017, I knew a popular online publisher that was convinced that Google was serving more and more top results to larger sites in their niche. They had specialized in digital marketing prior to owning several publishing properties of their own and were sure they needed to alter their strategy.

After two months of declines in organic traffic, they decided to convert all of their top organic landing pages to slideshows to better capitalize on growing Facebook traffic.

Since 2017, Facebook organic reach from publisher pages has declined steeply. This publisher watched organic search traffic completely bottom out after the change. Meanwhile, Facebook traffic dwindled as the platform pulled back reach.

This publisher ultimately saw their revenue reduced to 1/6 of what it was prior to the change. Their decision was based on a faulty premise and it cost them dearly.

On the other side of the spectrum, independent publisher Saul McLeod, from SimplyPsychology.org, was a lifelong psychologist and university professor. He started a website with little knowledge of online publishing and uploaded his expertly-written articles on psychology to his site over time. Since then, Saul’s site has grown exponentially and commonly outranks sites like Wikipedia and other major brand publications for high-volume search queries.

Will smaller sites always be able to outrank larger ones?

That is hard to say for sure. So far, Google has trended towards indexing a larger and larger number of sites every year. This means more potential results overall. Additionally, this has paralleled a trend in which results have continued to diversify away from a small number of large sites gobbling up all of Google’s organic search traffic.

This has actually forced some large publishers to question whether Google may be capping the amount of traffic it is willing to send to some websites.

No matter what kind of publisher you are, the data above is more representative of what types of content Google is looking for than what types of sites it is looking to position in search results. Publishers that build content for search are the ones most likely to continue benefiting. This means writing content for people using the search engines, not the search engines themselves.

Questions, thoughts, additional comments? Leave them below and I’ll chime in.

Tyler is an award-winning digital marketer, founder of Pubtelligence, CMO of Ezoic, SEO speaker, successful start-up founder, and well-known publishing industry personality.
