How To Solve Helpful Content Update Issues Using One Simple Strategy


Google has a helpful content update rolling out, and lots of publishers are justifiably nervous about how it might affect their site. What happens if the Google update has a negative effect on their site’s traffic?

I’ve spent a lot of time analyzing and assessing this, and I want to share with you some of the most important behaviors and strategies a publisher can adopt to ensure their site not only survives but thrives under this new Google helpful content update.

First, we need to understand the real problems publishers are facing, and who Google really is.

Keep in mind, although Google is truly a gigantic organization, there’s actually very little cooperation across teams, especially the Search team (the team that manages and maintains their search engine algorithm). Why? Google is rightfully very particular about the legal consequences of the Search team interacting with other Google team members. The Search team is literally the department that manages Google’s AI and search algorithm, the algorithm that dictates the organic traffic for 87% of all search engine queries globally. Google doesn’t want that team accidentally sharing secrets, or even unintentionally giving people false ideas about the Search team’s logic and procedures.

This comes with its own disadvantages, though. Five years ago, Google believed that organic search rankings would be 100% machine-driven. Unfortunately, these machine-driven algorithmic processes have led to an influx of SEO templated content published by content creators trying to appease what they think Google wants, while greatly diminishing user experience through cookie-cutter templates users abhor.

Instead of trying to come up with the latest SEO templates espoused by SEO “experts,” you need only focus on one major, overarching goal: create really good content your audience really likes.

Let’s talk about providing essential information. Something we’ve noticed at Ezoic that is supported by Google’s marketing and messaging is that users bouncing off a search results page and disappearing is largely a positive in their eyes for the majority of queries. Google sees users that spend excess time on sites and search results pages as a huge negative. If a user needs to spend a lot of time sorting through useless data and unrelated content, it signals a subpar site that is undeserving of more traffic.

See, sites and publishers will never see things the way Google does. While publishers become obsessed with SEO and keywords and h2 headers, Google pays super close attention to other, less obvious search result metrics like user result times, number of consecutive searches, related topic queries, and returns to the results page.

Essentially, most sites worrying about UX metrics are basically wasting their time. What we observed at Ezoic (and later confirmed by Google) is that longer sessions and a lower bounce rate, the numbers publishers usually treat as “good,” are often reflective of a site providing non-essential info. From Google’s perspective, it’s a good sign when a user has shorter sessions on a site and a higher bounce rate, which can signify that the user quickly found exactly what they were looking for, with no sifting through unrelated data or navigating a confusing maze of SEO templates.

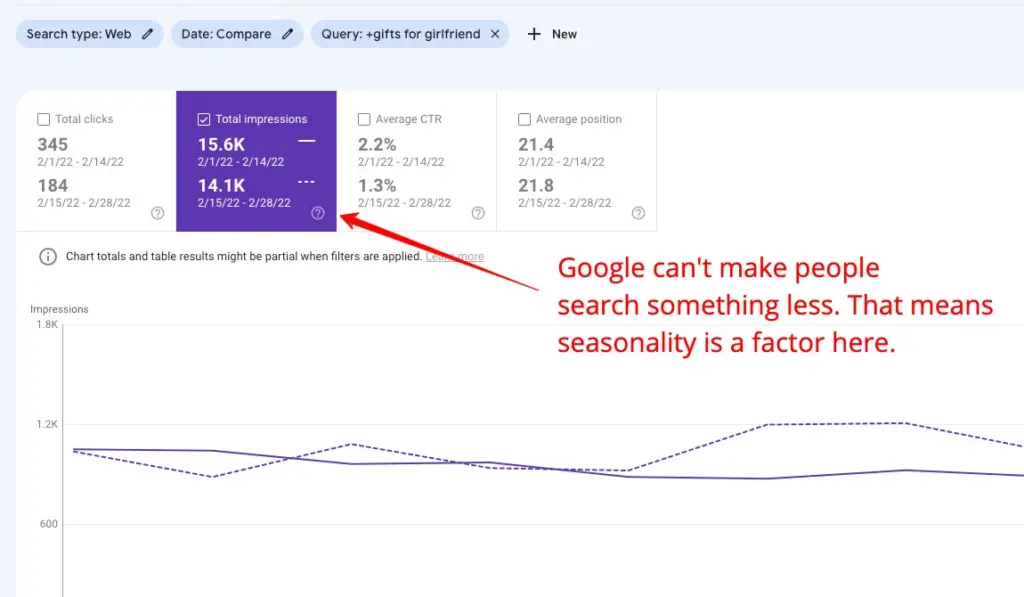

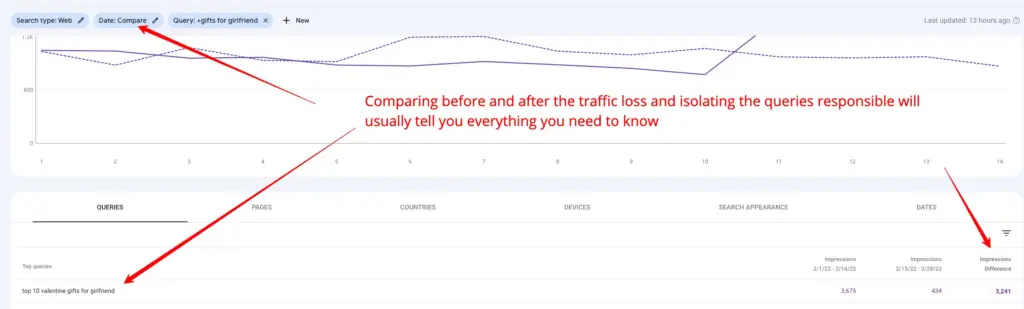

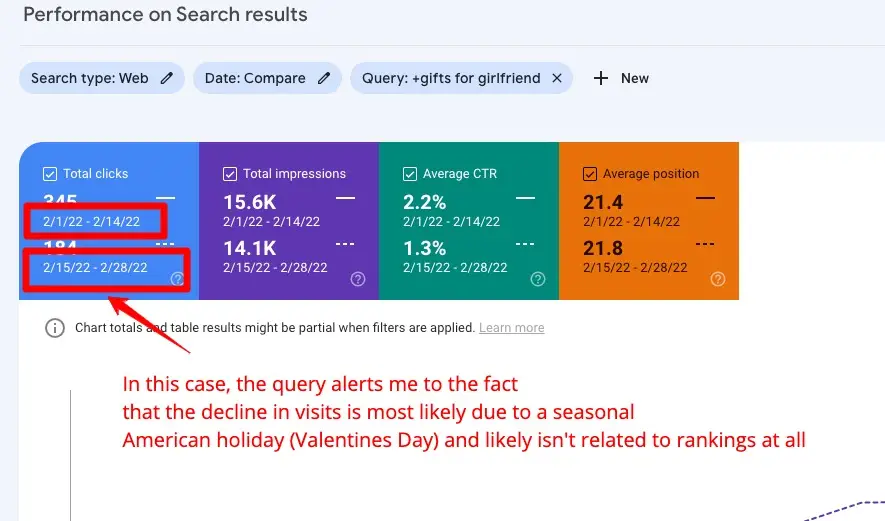

Google’s Search Console can help you identify why organic traffic goes up or down on a website.
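One way to approach that kind of Search Console analysis is to compare query-level clicks for a period before the update with a period after it. The snippet below is only a minimal sketch: the sample data is made up, and the column names (`query`, `clicks`, `impressions`) and the helper names `load_clicks` and `click_changes` are assumptions for illustration, so adjust them to match whatever your own export actually contains.

```python
import csv
import io

# Hypothetical sample data shaped like a query-level export.
# The column names are assumptions; rename them to match your real export.
BEFORE_CSV = """query,clicks,impressions
how to stop crying baby,120,2400
why babies cry,80,3000
baby sleep schedule,40,900
"""

AFTER_CSV = """query,clicks,impressions
how to stop crying baby,150,2500
why babies cry,30,2900
baby colic remedies,25,600
"""


def load_clicks(csv_text):
    """Return a {query: clicks} mapping from CSV text."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return {row["query"]: int(row["clicks"]) for row in reader}


def click_changes(before, after):
    """Return (query, before, after, delta) tuples, biggest losses first.

    Queries missing from one period are counted as 0 clicks there.
    """
    rows = []
    for query in set(before) | set(after):
        old = before.get(query, 0)
        new = after.get(query, 0)
        rows.append((query, old, new, new - old))
    # Sort by delta ascending, then by query text for a stable order.
    return sorted(rows, key=lambda r: (r[3], r[0]))


changes = click_changes(load_clicks(BEFORE_CSV), load_clicks(AFTER_CSV))
for query, old, new, delta in changes:
    print(f"{query}: {old} -> {new} ({delta:+d})")
```

Sorting by the change in clicks puts the queries that lost the most traffic at the top, which is usually where it makes sense to start asking whether the page actually answers the query.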

See, Google is aiming to de-emphasize sites that are trying to shotgun broad topic keywords into lengthy articles when the user is seeking an answer to a query that may only need 500 words or less. This is next-level content creation, where the publisher has perfected the art of giving their audience the exact content needed. The content isn’t fluffed up, excessive, or unnecessarily long for perceived SEO importance — it’s just excellent content that accomplishes precisely what it aims to.

There’s plenty of Google data to back this up. For instance, many of you may know that a hack for SEO for the last few years has been entity-related keyword association: essentially using Google’s public Google Trends data to discover all associated terms and topics for a keyword (e.g., “Arnold Schwarzenegger,” “California Governor,” “Predator”) and “over-cover” a topic in an attempt to generate more organic traffic. Few people knew how this worked, but over-covering topics became a technique espoused by SEO folks who didn’t understand the underlying mechanism and often substituted their own silly ideas as to why it worked.

The result? Subpar content that users greatly disliked. Upon meeting this flood of low-quality content in rankings that emphasize overly broad and long articles, users instead often entered search queries like “is Arnold Schwarzenegger alive REDDIT,” which they saw as a means of finding answers elsewhere because Google was providing poor experiences.

This helpful content update is designed to reward sites that provide succinct and user-oriented answers.

Another wrinkle to this conversation is the advent of AI-written content, a massive trend that’s just begun to take off. Publishers want to know how artificial intelligence could help their site, and how that kind of content relates to SEO.

Consider why AI cannot write the content that Google wants to rank.

Let’s say you search for “how to stop crying baby”. AI can write an article about “why babies cry,” which is probably just a bulleted list that could easily be a rich result. Publishers aren’t needed for that. But, HOW to stop a crying baby is content best provided by someone with expertise and AI cannot provide this direct and human info — and if it did — Google would certainly not want to rank it; you would want that information from someone who understands babies. I don’t have any major life experience with babies crying, but my mother does. I’m sure I cried a lot as a baby; thus, if we were both writing articles on the topic Google would want to understand the difference between my faux expertise and her actual expertise, and would naturally want to rank content by someone like her higher than mine, or AI.

Distinguishing the expertise in my example above is a far cry from what people think of as SEO today. AI content doesn’t need to be identified. It simply can’t produce this content and will stick out like a sore thumb. If the query can be answered by AI writers, Google can (and will) do that themselves and intends to provide those answers directly in rich results. With queries where expertise is actually needed, looking at backlinks and entity relationships doesn’t really seem to matter nearly as much.

So how do you measure expertise if you don’t consider backlinks or natural language processing to be that useful?

User behavior and pattern recognition seem to be the answer.

Google sought to understand what satisfied users did when they were seeking answers. They measured that behavior and experience and tried to understand how it varied across all the different types of queries that emerge every day. Then, Google rebuilt RankBrain to understand what these patterns looked like and to objectively recognize when new articles might deliver better results.

This means that Google will likely emphasize these unannounced metrics and patterns when testing and ranking pages now and in the future.

This all sounds like a lot, and it is. You’ll notice no one in SEO is talking about this in these terms; that’s really important to note because you can pretty much ignore anyone trying to act like a scientist on this stuff when they’re offering what amounts to conspiracy theories. I’m sure there will be no end to the stupid advice espoused over the next few months by people who simply do not understand the problem.

If you’re a publisher or content creator, there’s no “recovery” from a Google update other than to go back in time and make content people want. The metrics and information Google is using now were collected for over a year, and there’s been zero communication on whether new data will be collected and rolled in as it accrues in real time. What’s more, that data is absolutely unavailable in any way to publishers.

The way I see it, there are two things Google intends this helpful content update to do:

- Make SEO not worth attempting; Google hopes these changes will prove impossible to game, since they can’t be measured or understood in any objective manner.

- Force sites to provide answers based on an understanding of their audience and their own expertise.

This also directly impacts any publishers that run affiliate businesses or hire writers to produce content for their site. The truth is, Google thinks that is part of the problem: sites containing content from writers that are not experts in that field. Google would hope sites only use these methodologies in cases where they provide a fair value or level of expertise that is unavailable without them.

If any of this scares you, keep in mind that the web is largely not in a state to deliver this ideal experience Google imagines today. So, the disruption that is foretold will likely only occur in pockets at first and the ability to adapt will be equal for everyone.

Tyler is an award-winning digital marketer, founder of Pubtelligence, CMO of Ezoic, SEO speaker, successful start-up founder, and well-known publishing industry personality.
