Google Webmaster Guidance On Building High-Quality Sites

John Mueller of Google is referenced often in the world of SEO. Recently on a Google Webmaster Hangout, Mueller was asked what to do if a site is suffering a traffic loss due to Google’s June 2019 Core Algorithm update. Mueller’s answer was in line with the all-too-familiar vagueness surrounding Google’s updates. He said there aren’t specific things to fix. But…

Mueller mentioned that although there’s nothing explicit you can do, there’s an older post from Amit Singhal that covers a lot of questions you can ask yourself about the quality of your website. Mueller was citing the 2011 Webmaster Central blog post titled “More guidance on building high-quality sites.”

The blog centers around the “Panda” algorithm update, named after Google engineer Navneet Panda, who developed the technology that made it possible to implement specific quality elements into the search algorithm. Despite this Webmaster Central blog being older, Mueller still pointed publishers to this post as a good reference for those who are concerned about the quality of their content.

Below, I will take you through the most important takeaways from the post so you can understand why its information is still relevant to your site today.

Would you trust the information presented in this article?

How does a publisher know if their content is worth being trusted or not? We wrote a blog post on how to quickly add authority to your YMYL site, and the advice applies to all other sites as well. Here is a summary of the key points that factor into the relative trust of your website from Google’s perspective:

- Use citations to point Google’s crawler to where you got the information

- Evaluate your content holistically regarding any claims you’re making

- Disclose third-party relationships

- Create internal linking between your content on similar topics

- Ensure you have an author bio and link to any credentials you may have within the bio
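Two of the points above, citations and internal linking, can be spot-checked on a single page. The following is a minimal sketch, not a description of how Google evaluates trust: it simply lists which links on a page point to your own site and which point elsewhere. The names `LinkAudit` and `audit_links` and the host `example.com` are hypothetical, chosen for illustration.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class LinkAudit(HTMLParser):
    """Collects href targets from <a> tags and splits them by host."""

    def __init__(self, site_host):
        super().__init__()
        self.site_host = site_host  # assumption: your own domain, e.g. "example.com"
        self.internal = []
        self.external = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        if not href:
            return
        parsed = urlparse(href)
        # Skip non-web links such as mailto: or tel:.
        if parsed.scheme not in ("", "http", "https"):
            return
        # Relative links (no host) and same-host links count as internal.
        if parsed.netloc == "" or parsed.netloc == self.site_host:
            self.internal.append(href)
        else:
            self.external.append(href)


def audit_links(html, site_host):
    """Return (internal_links, external_links) found in the HTML string."""
    parser = LinkAudit(site_host)
    parser.feed(html)
    return parser.internal, parser.external
```

A page that makes factual claims but returns an empty external list, or an article with no internal links to related content, is a reasonable candidate for a second look.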

Does the site have duplicate or redundant articles on the same or similar topics with slightly different keyword variations?

This question targets sites that publish a lot of the same content. If you are saying the same thing over and over with slight keyword variations, Google sees this as content being written just for search engines.

You want to ensure that you are creating content that is truly valuable to the end-user, where one page has distinctive information from the next.

IMPORTANT: People get confused about ‘duplicate content’. Google is not penalizing publishers for having duplicate content, and it is not going to de-rank two pages because they have the same keywords. What matters in this case is whether each page is individually valuable, not whether the pages are optimized for the same keyword(s).
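One way to find pages on your own site that say nearly the same thing is to compare their text directly. The sketch below uses word shingles (overlapping three-word sequences) and Jaccard similarity. This is a common text-comparison technique, not Google’s method, and the function names are hypothetical. Any cutoff for "too similar" is a judgment call you would have to tune for your own content.

```python
import re


def shingles(text, size=3):
    """Return the set of overlapping word n-grams in the text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if len(words) < size:
        # Very short texts become a single shingle (or none if empty).
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard_similarity(text_a, text_b, size=3):
    """Share of shingles the two texts have in common, from 0.0 to 1.0."""
    a, b = shingles(text_a, size), shingles(text_b, size)
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)
```

Two articles that score close to 1.0 are effectively the same page with different keywords, and are candidates for merging or rewriting so that each offers distinct information.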

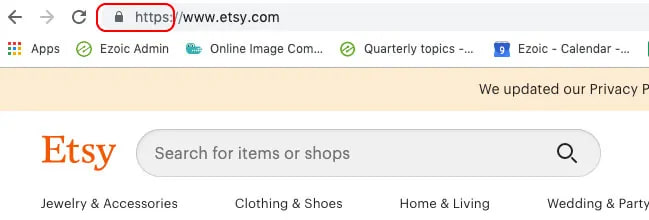

Would you be comfortable giving your credit card information to this site?

Even if you’re a publisher and you’re not taking anyone’s credit cards, this word of advice is still valuable. Google may be doing broad security checks across all sites, because if people enter any information on your site, they most likely want it to be secure.

SSL encryption is the best way to go about that. If Google were to do a security check, Etsy would pass because of its SSL encryption.
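Even with SSL in place, a page can still load individual images or scripts over plain `http://`, which browsers may flag as insecure. As a minimal sketch (the class and function names are hypothetical, and the list of tags checked is an assumption that is far from exhaustive), you could scan a page’s HTML for such resources:

```python
from html.parser import HTMLParser


class InsecureResourceFinder(HTMLParser):
    """Records resources that a page loads over plain http://."""

    # Assumption: a small, illustrative set of tags and their URL attributes.
    RESOURCE_ATTRS = {"img": "src", "script": "src", "iframe": "src", "link": "href"}

    def __init__(self):
        super().__init__()
        self.insecure = []

    def handle_starttag(self, tag, attrs):
        attr_name = self.RESOURCE_ATTRS.get(tag)
        if attr_name is None:
            return
        value = dict(attrs).get(attr_name) or ""
        if value.lower().startswith("http://"):
            self.insecure.append((tag, value))


def find_insecure_resources(html):
    """Return a list of (tag, url) pairs loaded without encryption."""
    finder = InsecureResourceFinder()
    finder.feed(html)
    return finder.insecure
```

An empty result does not prove a page is secure. It only means none of the checked tags reference an unencrypted URL.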

Does this article have spelling, stylistic, or factual errors?

There are many ways to check grammar, syntax, or fact-check content on your site. All of these can be done online.

Grammarly is a great tool to spell-check your content and ensure proper grammar. They also have a Chrome extension version of the app available. Yoast SEO has the Flesch Reading Ease test built into its plugin, and it’s helpful for monitoring your writing and adjusting accordingly.
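For context, the Flesch Reading Ease score is computed as 206.835 − 1.015 × (words ÷ sentences) − 84.6 × (syllables ÷ words), where higher scores mean easier reading. The sketch below applies that formula with a deliberately rough syllable counter (it counts vowel groups), so its numbers will differ somewhat from what Yoast or other tools report. The function names are hypothetical.

```python
import re


def count_syllables(word):
    """Rough heuristic: count groups of consecutive vowels, minimum one."""
    lowered = word.lower()
    count = len(re.findall(r"[aeiouy]+", lowered))
    # Treat a trailing "e" as silent when the word has another vowel group.
    if lowered.endswith("e") and count > 1:
        count -= 1
    return max(count, 1)


def flesch_reading_ease(text):
    """Apply the Flesch Reading Ease formula to a block of English text."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z]+(?:'[A-Za-z]+)?", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    words_per_sentence = len(words) / len(sentences)
    syllables_per_word = syllables / len(words)
    return 206.835 - 1.015 * words_per_sentence - 84.6 * syllables_per_word
```

Shorter sentences and shorter words both push the score up, which is why breaking up long sentences is usually the quickest way to improve it.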

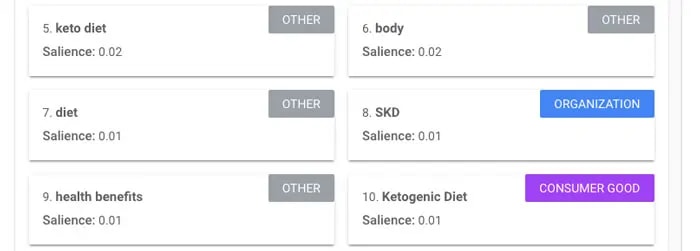

Google’s natural language tool can help make sense of the entities, syntax, sentiment, and categories within your content from the perspective of how Googlebot crawls your site.

For fact-checking, it’s important to cite where you’re getting your information from, and if it’s a topic of importance in Google’s eyes (YMYL), then it’s even more important to demonstrate to Google that your content is credible because you did your research.

Are the topics driven by genuine interests of readers of the site, or does the site generate content by attempting to guess what might rank well in search engines?

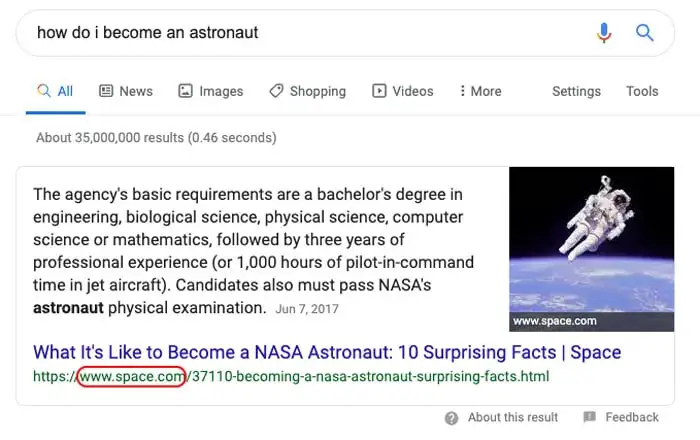

Whether topics are driven by the genuine interests of readers is a subjective measure, but what it boils down to is whether or not you are providing information that’s valuable to the readers. Let’s take a look at the Google search results for “How do I become an astronaut?”

The top result for this query is space.com. This site ranks ahead of nasa.gov’s #2 and #3 results that cover astronaut requirements and details on the candidate program.

Farther down on the SERP (search engine results page), space.com has more articles that rank on the first page of Google. Why is this example important? Because the fact that space.com holds the top result for this query doesn’t necessarily mean the publisher optimized for people who searched for it.

As a publisher, you can do both. You can write articles on topics that garner genuine interest from readers and simultaneously optimize your content for search engine results.

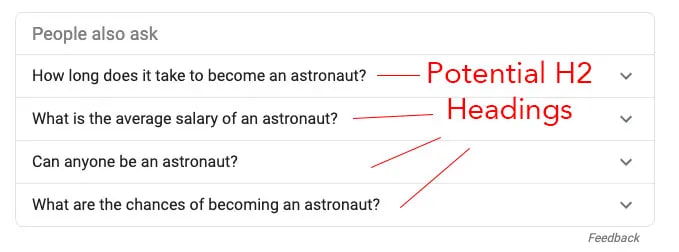

A great place to start is our content writing guide. If you own a site that publishes content around the topic of outer space, you can refer to the “People also ask” section of the search results to identify popular search queries on Google and assist in structuring your content.

These potential H2 headings can serve as guides to the questions you can answer within your content that will engage the person seeking out the information, while also structuring your content to be crawlable to Google.

Some of the answers to these search questions are unlikely to be answered by a traditional authority on the topic, like nasa.gov. This knowledge puts you in the position to provide these answers while optimizing your content for search at the same time.

Does the article provide original content or information, original reporting, original research, or original analysis?

Sites that create original content are going to be better off in the eyes of Google than sites that don’t. If you’re using a lot of images, content, and studies from other sites, what is it that you are providing that isn’t just a commodity?

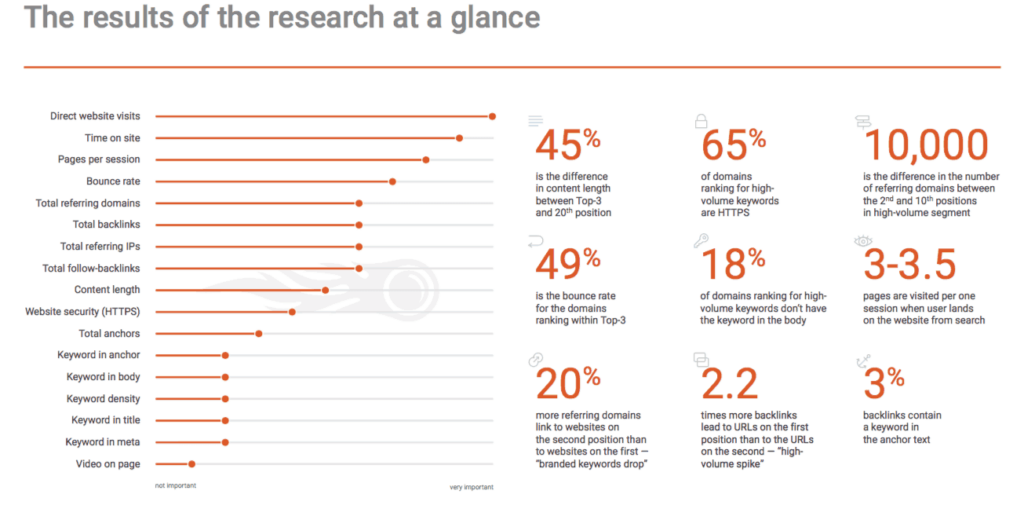

Original information, reporting, research, and analysis are individual elements that, when combined, complete the picture of distinct content. Our study on how the June Google Core Update affected digital publishers is an excellent resource for fine-tuning your content to keep up with Google’s frequent and often ambiguous changes.

How much quality control is done on content?

If you allow user-generated content or comments on your site, it is a best practice to monitor the comments and user posts on your content. Bad words and incorrect spelling might hurt how your site is crawled by Google if you don’t moderate user-generated content.

Does the article describe both sides of a story, and is my site an authority on its topic?

When you’re presenting information on YMYL (Your Money, Your Life) topics, it’s important to remember that Google determines the authority of these topics based on a number of ranking factors.

If your site is not a traditional authority on the topic (.gov, .edu, etc.) then ask yourself, “Does my content present both sides of a topic objectively, or am I presenting the information as fact without context to where I gained the information?”

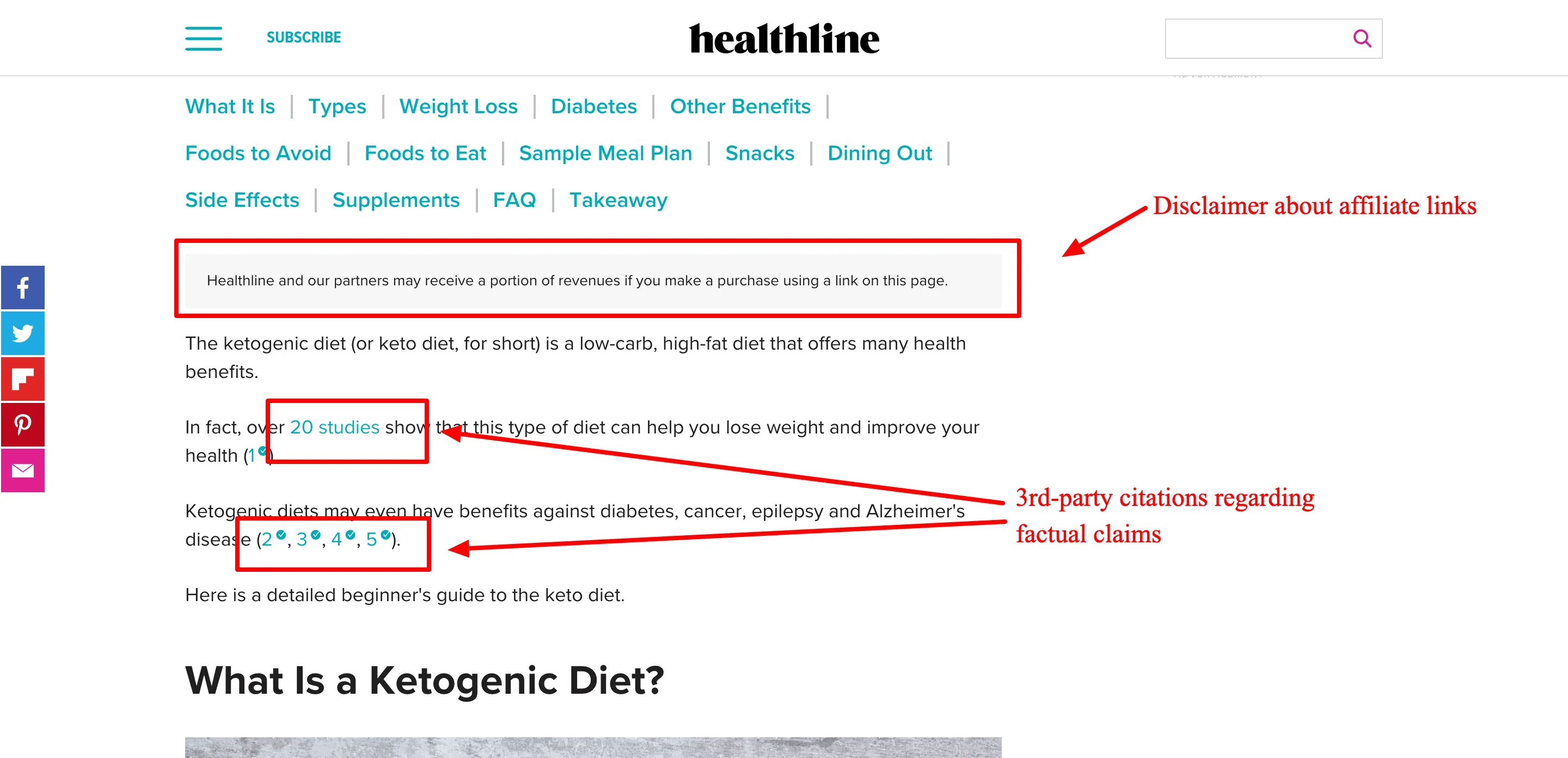

This article from Healthline demonstrates a site that has done its due diligence in citing its sources when making factual claims. Googlebot crawls your site and follows links (if they are set to follow) and can also make sense of your content using Google’s natural language tool.

The crawler can tell whether your site is truly about outer space, to use an earlier example. It can also see how much of your content encompasses the same topic and whether the author has published similar content on the site and elsewhere.

Is the content mass-produced by or outsourced to a large number of creators, or spread across a large network of sites, so that individual pages or sites don’t get as much attention or care?

The essence of this guideline is that Google is looking at whether you are leveraging a PBN or outsourcing all your writing to various writers. A PBN is a private blog network where all people involved agree to backlink each other’s content. The main purpose of this method is to cheat how Google measures site authority and subsequently increase the ranking of, typically, the ‘money site’.

The drawback to these networks is that Google is extremely skilled at identifying and taking them down. In September of 2018, Google sent out widespread manual action notices via Google Webmaster Tools to these sites for “thin content” spam and “unnatural links”.

Then again, if you’re a publisher and you’re using a ton of outsourced writers, what kind of quality controls do you really have in place to make sure your content is good in the first place?

Each publisher has to make the decision with their own content. And while “content is king” is a phrase used frequently within this space, there are effective ways to determine whether what you’re publishing is average content or quality content.

Is this the sort of page you’d want to bookmark, share with a friend, or recommend?

One of the biggest identifiers Google looks at is direct traffic. The number of people who go straight to your website without searching for it is one of the top ranking signals. Social traffic, direct traffic, and backlinks to your site show Google that this is content people want.

Although links from Facebook, Twitter, and other social media are set to no-follow, social signals are still cues that Google looks at to identify whether the content is useful to the end-user, along with the other factors mentioned above. They want to see people coming to your content from places other than search.

EXAMPLE: Imagine you are a publisher that writes about skincare. The logic behind this ranking signal is that if many people who find your article on acne prevention subsequently copy and paste the URL to a friend in a message, or share it on Facebook, Twitter, etc., that conveys to Google that your content has shareability. Higher direct traffic from this kind of sharing signals to Google that the content is good.

Would users complain when they see pages from this site?

This measure is also subjective, but it’s a good thing to think about when building and maintaining your site. As of July, Googlebot uses a mobile-first index when it crawls sites. This means that whatever mobile version of your site you show users is what Google sees as well.

If your site is not properly optimized for mobile, it will suffer in comparison to sites that are. Here’s a quick checklist to help you avoid optimization errors that have the potential to cost you traffic and money.

- Your menu and navigation are mobile-friendly

- No content overruns the edges of the screen

- No ads taking up a majority of the viewport in one location

- Images are sized properly for mobile or open in a new tab when clicked

- No overly long blocks of text
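The last item on the checklist is the easiest to check automatically. As a rough sketch, the function below flags paragraphs in a plain-text draft that exceed a word limit. The name `long_paragraphs` and the default limit of 120 words are hypothetical. There is no official threshold, so pick whatever reads comfortably on a phone screen for your layout.

```python
import re


def long_paragraphs(text, max_words=120):
    """Return (paragraph_index, word_count) for paragraphs over the limit.

    Paragraphs are assumed to be separated by blank lines.
    """
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]
    flagged = []
    for index, paragraph in enumerate(paragraphs):
        word_count = len(paragraph.split())
        if word_count > max_words:
            flagged.append((index, word_count))
    return flagged
```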

You want to ensure that when visitors land, there aren’t any elements of your site that they immediately see as red flags and that send them back to the SERP for a better result.

While I didn’t cover every single guideline, you can read the full guidance on building high-quality sites blog post from Google. You can use this explanation of the major takeaways from the blog post as a launching pad to re-evaluate your content and ensure a high-quality site for your visitors.

If you have any comments or questions, I’ll try to answer them below.

Allen is a published author and accomplished digital marketer. The author of two separate novels, Allen is a developing marketer with a deep understanding of the online publishing landscape. Allen currently serves as Ezoic's head of content and works directly with publishers and industry partners to bring emerging news and stories to Ezoic publishers.
